Sub-Median Disruption: Why AI Doesn’t Need to Be That Good

An example from the coaching economy.

Many conversations about AI disruption focus on when AI will match or exceed elite human performance: the moment it surpasses the skills of a top surgeon, an expensive lawyer, or a clever strategist. The far end of this framing is superintelligence: systems that dramatically outperform humans across the board.

I’ve been thinking quite a bit about a different kind of disruption that gets less attention, particularly with generative AI systems. These systems, large language models trained on vast corpora of text, don’t need to be excellent to damage or destroy an industry’s economics. They just need to be good enough at tasks like planning, pattern recognition, adaptation, and suggesting alternative approaches. More specifically, they need to reach median performance in industries that have the right structural vulnerabilities.

I want to propose a concept for understanding this: the “sub-median disruption principle.” The idea is simple: in industries with asymmetric quality distributions and low barriers to entry, generative AI performing at the median level makes everyone below that median economically unviable, even if the AI itself isn’t particularly impressive or innovative.

This isn’t just speculation. The pattern is already visible in the coaching economy, and it tells us something important about which industries are vulnerable next. In this piece, I’ll show how this principle builds on existing automation theory, why quality distribution matters as much as AI capability, and what the coaching industry reveals about the economics of “good enough.”

This isn’t about robots or automation in the traditional sense, where the payoff from scaling depends on reaching a specific threshold in the market. It’s about AI systems that can perform, or simulate the performance of, the kinds of cognitive tasks that make up a portion of knowledge work. And it’s about economics, quality distributions, and what happens when “good enough” arrives at a fraction of the cost to the consumer.

Building on Existing Automation Theory

Most automation theory focuses on task-level displacement. Economists like Daron Acemoglu and Pascual Restrepo have documented how automation displaces workers through what Acemoglu calls “so-so technologies”: systems that don’t need to be revolutionary or even very good, just good enough to be cheaper than human labor for specific tasks.[^1] Isaac Tham frames it clearly: if an AI reaches the 75th percentile in a task, it displaces everyone below that line.[^2]

These frameworks are foundational to the argument here, but they don’t consider how AI performance interacts with the quality distribution within an industry.

Some industries have tight quality distributions. Think airline pilots or cardiac surgeons. The gap between the 30th percentile performer and the 70th percentile performer is relatively small because the barriers to entry are high, the feedback loops are fast and hard, and negligence or incompetence produces catastrophic, immediate failures. People die.

Other industries have wide, asymmetric quality distributions. The gap between below-median and above-median practitioners can be enormous. These industries seem to share a few characteristics:

Low barriers to entry

Slow or ambiguous feedback loops

Subjective quality assessment

Credentialing theater

When generative AI reaches median performance in industries like this, it doesn’t just match the average practitioner. It makes the entire bottom half of the market economically obsolete and puts pressure on everyone at or above the median to justify their cost-value over the “good enough” solution.
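
To make the role of the quality distribution concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the quality scores are synthetic, the "tight" market is modeled as a narrow normal distribution, the "wide" market as a right-skewed lognormal, and the function name `upgrade_from_median_ai` is my own. It asks one question: how much better off is a client who swaps a 30th-percentile practitioner for an AI performing at the market median?

```python
import random

def percentile(sorted_values, p):
    """Return the value at percentile p (0-100) by nearest rank on a sorted list."""
    index = min(len(sorted_values) - 1, int(p / 100 * len(sorted_values)))
    return sorted_values[index]

def upgrade_from_median_ai(qualities, client_percentile=30):
    """Relative quality gain for a client who replaces a practitioner at
    `client_percentile` with an AI that performs at the market median."""
    ordered = sorted(qualities)
    median = percentile(ordered, 50)
    current = percentile(ordered, client_percentile)
    return (median - current) / current

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# ASSUMPTION: a "tight" market (high barriers to entry), quality clustered near 100.
tight = [rng.gauss(100, 5) for _ in range(10_000)]

# ASSUMPTION: a "wide" market (low barriers to entry), right-skewed quality scores.
wide = [rng.lognormvariate(4.6, 0.8) for _ in range(10_000)]

tight_gain = upgrade_from_median_ai(tight)
wide_gain = upgrade_from_median_ai(wide)
print(f"tight market: {tight_gain:.1%} gain from switching to a median AI")
print(f"wide market:  {wide_gain:.1%} gain from switching to a median AI")
```

Under these made-up parameters, the gain in the tight market is small while the gain in the wide market is large, which is the point of the principle: the same median-level AI is a rounding error in one industry and a clear upgrade in another. The specific numbers depend entirely on the distributions chosen and should not be read as estimates for any real industry.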

Coaching as Example

I believe the coaching economy offers an example of this principle. Not because I have anything against coaches (my spouse is one), but because the recent explosion of coaching as an industry has created the conditions that make it vulnerable to sub-median disruption.

Over the last decade, coaching has grown into a multibillion-dollar global market. But unlike professions with tight quality controls and high barriers to entry, coaching has developed a dramatically asymmetric distribution: many coaches operating at the lower end, providing generic advice and frameworks downloaded from weekend certification programs; a middle tier delivering real but modest value; and a smaller group at the apex whose combination of expertise, intuition, and relationship building can actually transform lives.

The barriers to entry explain why. Roughly a quarter of coaches operate without any formal accreditation at all, though I suspect this underestimates reality since most coaches I know have no formal coaching credentials and there’s no unified registered body of coaches. You can sell advice, mentor someone, and call yourself a coach.

The feedback loops are slow and ambiguous. Did the coaching help, or would you have figured it out anyway? Was the breakthrough due to the coach’s insight, or just the act of talking through your problem out loud? Clients often can’t tell, which means quality remains opaque.

Now consider what median-level coaching actually looks like:

Asking clarifying questions that help clients articulate their challenges

Recognizing common patterns in behavior and decision-making

Applying established frameworks

Providing accountability and structure

Offering perspective that helps people see situations differently

Current frontier large language models can already do much of this. They’ve been trained on a massive corpus of psychological and business knowledge, as well as general knowledge. They can pattern-match situations to relevant frameworks, generate thoughtful questions, adapt their approach based on context, and maintain consistency across sessions. They’re available 24/7 and cost a fraction of what human coaches charge.

This is where economics becomes a problem. The average coach charges $200-250 per hour. AI coaching platforms charge $20-50 per month for unlimited access. Even if you’re a coach operating at the 60th percentile, you now have to justify a price premium of roughly 10x for a potentially imperceptible improvement in quality and value. That’s a difficult sell.
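
The premium is easy to check with the figures above. The sketch below is only arithmetic, and it rests on two assumptions of mine that are not in the pricing data: sessions last one hour, and a client books one, two, or four sessions per month. The helper name `monthly_premium` is hypothetical.

```python
def monthly_premium(hourly_rate, sessions_per_month, ai_monthly_fee):
    """How many times more a client pays per month for a human coach
    than for an AI subscription."""
    return (hourly_rate * sessions_per_month) / ai_monthly_fee

# Figures from the text: $200-250 per hour for a coach, $20-50 per month for AI.
# ASSUMPTION: one-hour sessions; the sessions-per-month values are illustrative.
for sessions in (1, 2, 4):
    low = monthly_premium(200, sessions, 50)   # cheapest coach vs priciest AI plan
    high = monthly_premium(250, sessions, 20)  # priciest coach vs cheapest AI plan
    print(f"{sessions} session(s)/month: {low:.1f}x to {high:.1f}x")
```

Even a single session per month lands between 4x and 12.5x the cost of the subscription, and the multiple scales linearly with every additional session, so 10x is, if anything, a conservative shorthand for a client who meets their coach regularly.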

For clients currently working with coaches at the 30th percentile, AI at the median represents a perceived upgrade, not a trade-off. The sub-median disruption isn’t just replacing humans with worse machines; it’s replacing below-average practitioners with median machines, which is seen as an improvement for a significant portion of the market.

Tech platforms are figuring this out. BetterUp has already launched AI-only coaching products reporting 95% customer satisfaction. They’re not positioning AI as a supplement to human coaching; they’re positioning it as a replacement for most use cases, with human coaches reserved for complex, high-stakes situations.

Some coaches see this coming and try to incorporate AI into their practice, using it for session prep, pattern analysis, and between-session support. But when a coach’s value increasingly derives from curating AI tools rather than from their own expertise, the economic center of gravity has already shifted. The money flows toward the platforms providing the capability, not the human intermediary. If the generative AI generates the insights and asks the probing questions, what exactly am I paying the human for? And the tech platforms will eventually “solve” the practitioners’ distribution problem by building tools for them, then slowly eat up the small pieces of the pie those practitioners have left.

Why This Pattern Is Hard to See Coming

This pattern is particularly alarming because it doesn’t look like disruption when it’s happening, and it’s portable to other knowledge work and creative work. Industries with similar structural characteristics could be vulnerable to the same dynamic: low barriers to entry, wide quality distributions, slow feedback loops, subjective evaluation.

The sub-median disruption principle suggests that generative AI doesn’t need to reach elite performance to affect an industry’s economics. It just needs to be good enough to serve the needs currently met by below-average practitioners while offering noticeably better economics to the consumer.

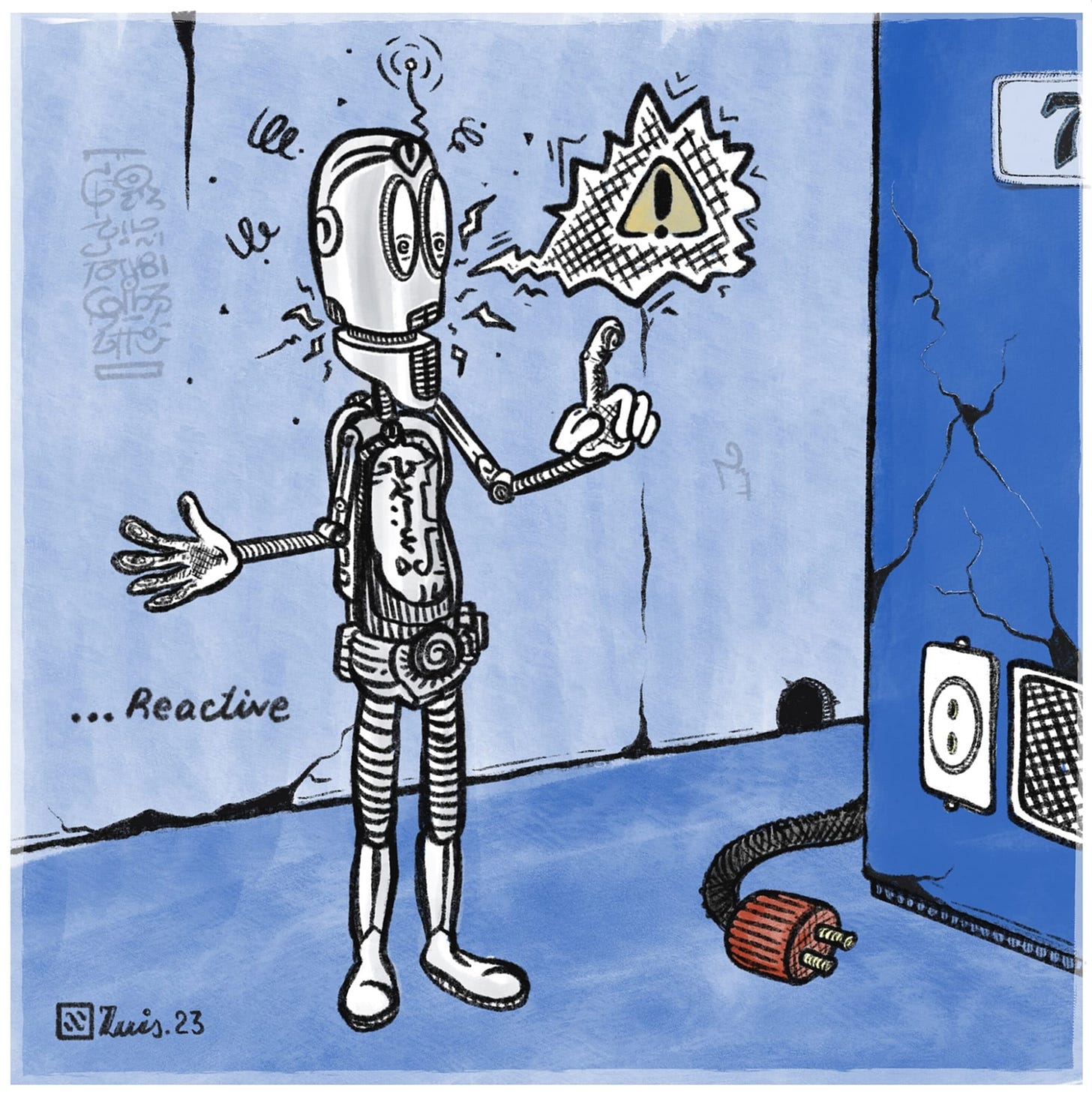

Most people think about AI disruption as a “big bang” event, as if an OpenAI product announcement will transform an industry overnight (many in Silicon Valley seem to think this is exactly what will happen). We’re waiting for a dramatic threshold crossing, a clear before-and-after moment. The sub-median disruption principle suggests something different. It works more like quicksand than an explosion.

There’s no single moment when coaching gets disrupted. Instead, coaches at the 40th percentile just find that client acquisition gets a bit harder. Their pricing power erodes slightly. They have to compete against “good enough” alternatives that cost 10x less. Some clients who would have hired them try an AI coaching app instead. Their calendar has more gaps. They lower their rates to stay competitive. The economics slowly deteriorate.

By the time it’s obvious that disruption is happening (when established coaches are struggling to maintain their practices, when industry revenues have visibly shifted away from human practitioners, when the trade publications are writing about it), you’re already halfway under.

This gradual consumption changes everything about how we should think about AI’s impact on knowledge work. It means:

The disruption is already underway in industries we think are “safe.” We’re waiting for AI to get good enough to disrupt legal work, consulting, financial planning, content creation. But in each of these fields, the bottom third of practitioners are already feeling economic pressure. Their clients are already experimenting with cheaper AI alternatives. The quicksand is already rising. We just don’t see it yet because there hasn’t been a dramatic moment that makes the news.

By the time disruption is obvious, it’s too late to adapt. The coaches who will survive aren’t the ones who start adapting when AI coaching apps become mainstream. They’re the ones adapting now, while they still have the economic cushion and client base to rebuild their practice around something AI can’t easily replicate.

The question isn’t whether your industry will be disrupted; it’s whether you can feel the quicksand rising. Do you work in a field with low barriers to entry and wide quality variation? Are your below-median competitors still economically viable? Are clients starting to ask about AI alternatives, even casually? That’s not idle curiosity. That’s the quicksand rising.

The quicksand doesn’t announce itself. You just realize, slowly, that it’s getting harder to move.

References

[^1]: Acemoglu, D., & Restrepo, P. (2022). “Tasks, Automation, and the Rise in US Wage Inequality.” Econometrica. Documents how automation displaces workers through “so-so technologies” that don’t need to be revolutionary, just cheaper than human labor for specific tasks.

[^2]: Tham, I. (2025). “The 2 Economic Effects of AI: Augmentation and Automation.” Towards Data Science. Articulates how AI at the 75th percentile of performance displaces all workers below that threshold.

Yep. Appreciate your articulation of a pattern I have been sensing. The question is how to rebuild around something AI can’t easily replicate? As a coach in transition myself, I've been thinking it's about community and human connection, preferably in person. Voices. Sharing our own true stories. I'd be interested in your perspective on what you see as "AI proof" over the next 10 years. Human caregiving? What else?