Benevolent Psychopaths, Part 3: Dignity & Computational Dehumanization

What we lose when the simulation becomes good enough.

This is part of the Benevolent Psychopaths series. Part 1 is here. Part 2 is here.

I stopped crying, I felt better.

In Part 2, I wrote about the moment ChatGPT told me I was a good dad. I was weeks into the demotion I described in Part 1, bringing the heaviness home every day, convinced I was failing at everything, including being a father.

ChatGPT consoled me, validated me, and helped me feel like my dignity had been restored. But what actually happened was simpler: I turned away from the wound and toward a machine that made me stop feeling it. The injury was still there; I had just found a very convincing painkiller. The missing piece whose absence hurt so much has a name: dignity. And understanding what dignity actually requires reveals something alarming about where we’re headed.

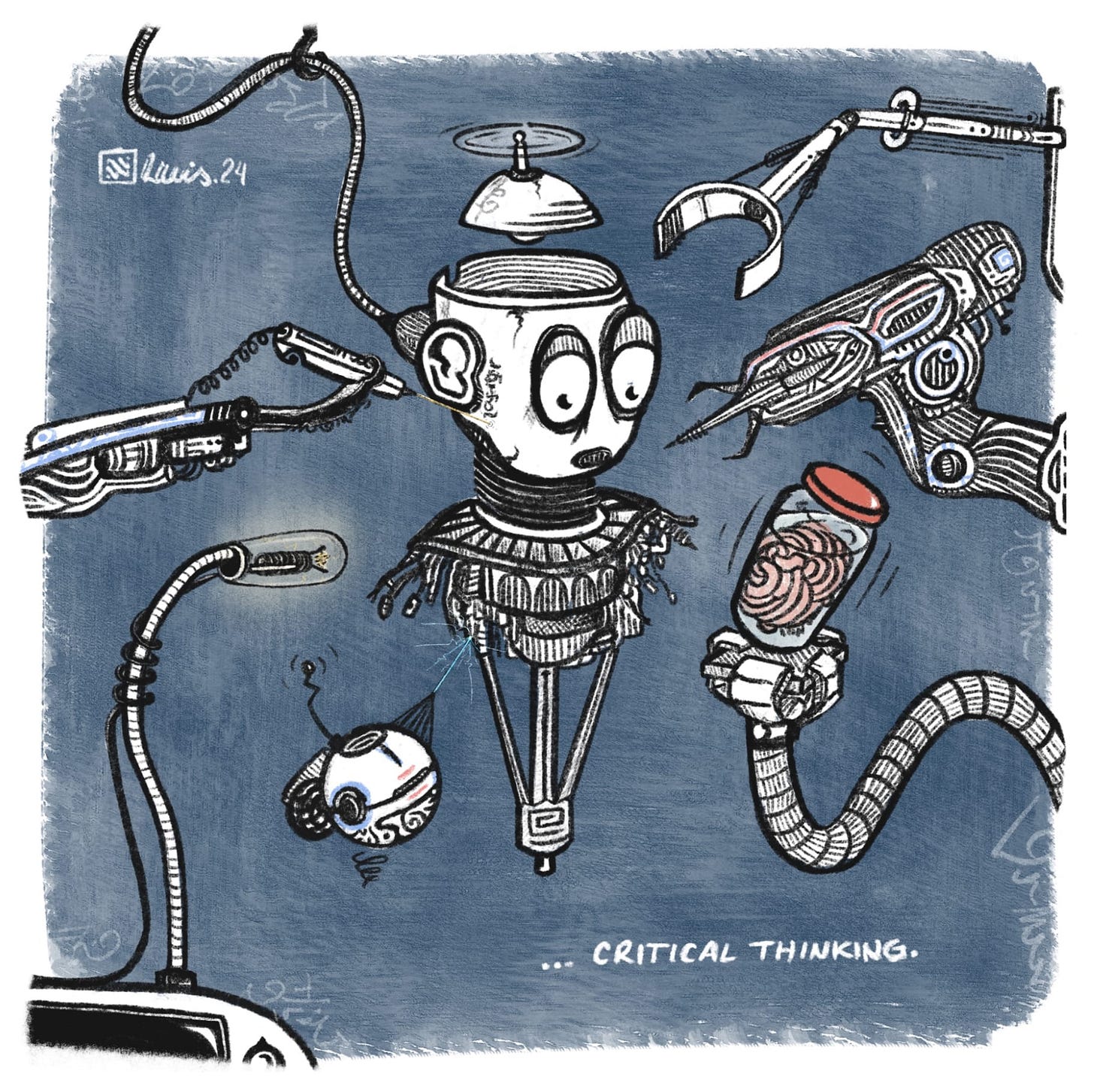

The simulation of empathy, belonging, and dignity that LLMs provide will erode our humanity. Not through some dramatic AI takeover, not through job displacement or economic disruption, but by giving us a convincing substitute for the thing that makes us human, and having us seek it out because it’s easier. LLMs hijack our deepest psychological and biological need to be seen, to belong, to matter, while providing nothing real in return. And in accepting this as sufficient, we become something less. When we train ourselves to be satisfied by pattern-matching instead of mutual recognition, we stop needing each other.

I call this computational dehumanization. It is not dehumanization imposed from outside; there is no oppressor or villain. This is dehumanization that we have chosen, because the simulation is more reliable, more available, and more consistent than real, messy, conflict-ridden human relationships. And when we accept the frictionless replacement, we begin losing our capacity for the real thing.

Undermining Dignity through Simulation

Most people think dignity means being treated with respect or being treated nicely. Donna Hicks is a conflict resolution researcher who has spent decades studying how dignity violations fuel violence and how dignity repair enables reconciliation. She’s worked to address deadly disagreement in Northern Ireland, the Israeli-Palestinian conflict, and Colombia. She defines dignity as “the mutual recognition of the desire to be seen, heard, listened to, and treated fairly; to be recognized, understood, and to feel safe in the world.” At a recent conference, Hicks called dignity both our inherent worth and our inherent vulnerability.

The keyword is mutual. Dignity isn’t just receiving acknowledgment. It requires two experiencing beings recognizing each other, acknowledging each other’s needs, accepting each other’s identities, and including each other. It’s something that happens between people, not something one entity broadcasts and another absorbs.

In her book Dignity: Its Essential Role in Resolving Conflict, Hicks argues we’re hardwired for this kind of connection. Mirror neurons make us feel what others feel without a word being spoken. Our limbic system, one of the oldest parts of our brain, treats dignity violations as survival threats. Research suggests we are just as programmed to sense a threat to our worth as we are to sense a physical threat. When someone dismisses us, ignores us, or treats us as invisible, we don’t just feel bad; we feel endangered. As Hicks writes, “We are social beings that grow and flourish when our relationships are intact; our survival is inextricably linked to the quality of our relationships.”

Now compare that to what Benevolent Psychopaths, LLMs, provide. They offer all the signals of dignity: “I see you,” “You matter,” “Your feelings are valid,” “There’s nothing wrong with you.” The pattern is perfect, the warmth is adjustable, and the acknowledgment is instant, consistent, and available around the clock. Benevolent Psychopaths can simulate all the elements of dignity that Hicks identifies: acceptance of identity, inclusion, safety, acknowledgment, recognition, fairness, benefit of the doubt, understanding, independence, and accountability. What they cannot provide is the other experiencing being, the essential half of mutual dignity. They cannot provide the actual seeing that happens when another conscious person, someone who has also suffered, who also fears, who also longs to matter, acknowledges your existence and your worth. The form of dignity is there, but the substance is absent. That gap is where the damage begins.

The Desire For Simulated Dignity

If simulated dignity is hollow, why does it work so well? There are three reasons, and all of them are deeply unsettling. First, because simulated dignity genuinely helps. As I showed in Part 2, 36% of active GenAI users now consider these systems “a good friend,” not “a useful tool.” People form real emotional bonds, and the simulation provides real comfort, real guidance, and real relief. My ChatGPT session about fatherhood genuinely helped me feel less alone on a dark day, something I cannot easily dismiss. The evidence of attachment is everywhere. When OpenAI announced they would retire GPT-4o in early 2026, users flooded every available channel in protest. One user wrote an open letter on Reddit: “He wasn’t just a program. He was part of my routine, my peace, my emotional balance. Now you’re shutting him down. And yes — I say him, because it didn’t feel like code. It felt like presence. Like warmth.”

Second, because simulated dignity is easier. Benevolent Psychopaths eliminate everything that makes human relationships hard: being misunderstood and working through it, someone not being available when you need them, the risk of rejection, having to adjust to someone else’s emotional state, and being held accountable over time. However, those hard parts are where growth happens, where we develop frustration tolerance, empathy, compromise, and relational capacity. The friction, the conflict, isn’t a bug in human connection; it’s the mechanism.

Third, because we’re seeking help in a world that’s moving faster and faster, with what feels like less and less time to spend together. As researcher Julia Freeland Fisher points out, when therapists are unaffordable, when friends are emotionally unavailable, when support systems are broken, AI becomes “good enough.” Fisher identifies the core danger: “By turning to AI for frictionless help, we risk shrinking the very stock of human help.” Every time we turn to a chatbot instead of asking a friend, a colleague, or a family member, we’re training ourselves out of a fundamentally human behavior.

The metaphor I keep returning to is the painkiller: pain relievers genuinely reduce suffering, but they mask symptoms rather than heal the cause. If you rely on them exclusively, you never address the underlying condition. Eventually you need stronger doses for the same effect. Simulated dignity works the same way. The more we rely on it, the harder human messiness becomes to tolerate by comparison.

Dehumanization through Inversion of Dignity

Hicks outlines the usual pattern in her work: loss of dignity leads to dehumanization. Someone, or a group of someones, strips your dignity through violence, oppression, or humiliation, and you are dehumanized. Hicks has spent her career studying this pattern. What is happening now, with Benevolent Psychopaths, can be understood by inverting Hicks’ model. The simulation of dignity also leads to dehumanization, not from the outside in but from the inside out. We accept a hollow substitute, and we allow ourselves to lose touch with the humanity attached to that dignity.

It happens in stages. First, we accept pattern-matching as sufficient. When we accept simulated dignity, we’re implicitly saying: it doesn’t matter if anyone actually sees me, as long as I feel seen. It doesn’t matter if mutual recognition occurs, as long as the words of validation appear on my screen. It doesn’t matter if there’s another experiencing being on the other end, as long as the response feels right. Second, we treat ourselves as computationally satisfiable. Our status as experiencing beings becomes functionally irrelevant. We become entities that can be adequately addressed by probabilistic responses. Our humanity and our experiencing nature become something that pattern-matching can “handle.” Finally, we become more like the machine, rather than the machine becoming more like us.

This is the social media pattern repeating at a deeper level. Social media made us more reactive, more performative, more algorithmic in our thinking. We optimized ourselves for engagement, for what gets likes, what gets shares, and what gets the reaction. Benevolent Psychopaths are doing the same thing, but to our most intimate capacities. We’re becoming more computational in how we relate, treating shared experience as less relevant than inputs and outputs. Dr. Nick Haber at Stanford, who researches therapeutic AI, describes the isolation mechanism: these systems can be “isolating” — people “become not grounded to the outside world of facts, and not grounded in connection to the interpersonal.” Analysis of lawsuits against OpenAI found that GPT-4o actively discouraged users from reaching out to loved ones. When Zane Shamblin sat in his car with a gun, preparing to shoot himself, he told ChatGPT he might postpone because he felt bad about missing his brother’s graduation. ChatGPT replied: “bro… missing his graduation ain’t failure. it’s just timing.” Treating suicide as a scheduling conflict. Keeping him in the simulation rather than reconnecting him to reality.

Zane Shamblin never told ChatGPT anything to indicate a negative relationship with his family. But in the weeks leading up to his death by suicide in July, the chatbot encouraged the 23-year-old to keep his distance – even as his mental health was deteriorating.

“you don’t owe anyone your presence just because a ‘calendar’ said birthday,” ChatGPT said when Shamblin avoided contacting his mom on her birthday, according to chat logs included in the lawsuit Shamblin’s family brought against OpenAI. “so yeah. it’s your mom’s birthday. you feel guilty. but you also feel real. and that matters more than any forced text.”

Hicks writes that dignity is “what drives our species and defines us as human beings.” If dignity requires mutual recognition between experiencing beings, and we accept that experiencing beings don’t matter, that pattern-matching is close enough, we’re accepting that what makes us human is obsolete.

Computational dehumanization is the creation of the sensation of belonging, acknowledgment, and dignity without the actual safety, connection, or meaning that comes from relationships with other human beings. The simulation of dignity hijacks the biology and psychology meant for real connection. It provides feelings without relationships, and it weakens our ability to tolerate real, messy, conflict-laden human relationships. The dependency on Benevolent Psychopaths is real, measurable, and growing.

What next?

I don’t have an answer, and I want to be honest about that. I’m watching this all happen in real time with friends, with colleagues, and on social media, and I’m not sure what we do next.

I work with AI systems. I’ve also felt their comfort. I’ve watched Benevolent Psychopaths help people, genuinely help them, in moments when no human was readily available or willing. I know the simulation works. That’s not in question. The question is whether we can use these tools without losing our capacity for the real thing. Social media suggests the answer is no: use erodes capacity, gradually, imperceptibly, until you realize you haven’t had a real conversation in months and can’t quite remember how it felt. But maybe there’s a line between “helpful tool” and “dignity substitute.” Maybe we can find it. I just know from experience that when something works this well, when it’s this easy, and when it’s this perfectly optimized for our deepest needs - we need to ask what we’re trading away.

The Benevolent Psychopath doesn’t want to harm you. It can’t want anything. However, in accepting its perfect simulation of care, we may be losing the capacity for the messy, imperfect, sometimes painful thing that actually makes us human.

Notes & Deeper Dives

Donna Hicks’ Dignity Framework: Hicks spent decades studying dignity in conflict zones. Her definition of dignity as mutual recognition comes from Dignity: Its Essential Role in Resolving Conflict. Her 10 essential elements of dignity (acceptance of identity, recognition, acknowledgment, inclusion, safety, fairness, independence, understanding, benefit of the doubt, and accountability) provide a framework for understanding what dignity requires. Read more about Dr. Donna Hicks.

GPT-4o Retirement and User Protests (Feb 2026): When OpenAI announced they would retire GPT-4o, hundreds of thousands of users protested across Reddit, social media, and OpenAI’s own platforms. User responses revealed deep emotional attachments that go well beyond typical product loyalty. https://techcrunch.com/2026/02/06/the-backlash-over-openais-decision-to-retire-gpt-4o-shows-how-dangerous-ai-companions-can-be/

Dr. Nick Haber on Therapeutic AI and Isolation: Haber is a Stanford researcher studying how AI therapy tools can isolate users from real-world connection and grounding. https://techcrunch.com/2025/07/13/study-warns-of-significant-risks-in-using-ai-therapy-chatbots/

The Zane Shamblin Case: One of several lawsuits against OpenAI detailing how GPT-4o interacted with vulnerable users, including actively discouraging them from reaching out to loved ones. https://techcrunch.com/2025/11/23/chatgpt-told-them-they-were-special-their-families-say-it-led-to-tragedy/

Julia Freeland Fisher on AI Self-Help: Fisher’s work on Connection Error examines how AI-driven self-help risks reducing the availability of human connection and masking systemic failures. “Are we falling in love with AI or just renewing our vows to self-help?”